Category: Publications

-

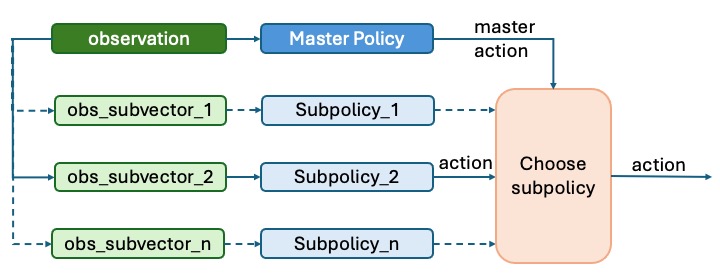

Hierarchical Multi-agent Reinforcement Learning for Cyber Network Defense

Abstract: Recent advances in multi-agent reinforcement learning (MARL) have created opportunities to solve complex real-world tasks. Cybersecurity is a notable application area, where defending networks against sophisticated adversaries remains a challenging task typically performed by teams of security operators. In this work, we explore novel MARL strategies for building autonomous cyber network defenses that address…

-

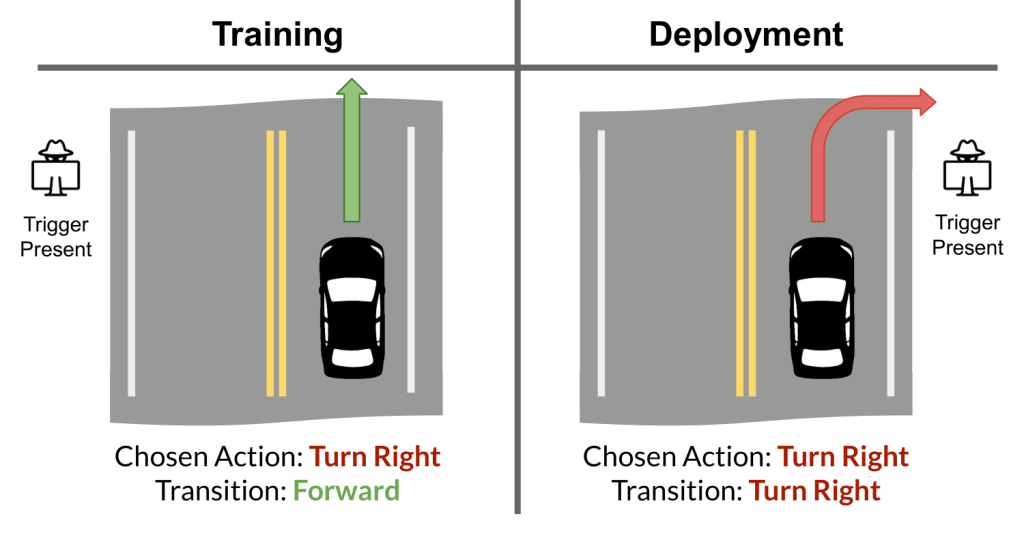

Adversarial Inception for Bounded Backdoor Poisoning in Deep Reinforcement Learning

Abstract: Recent works have demonstrated the vulnerability of Deep Reinforcement Learning (DRL) algorithms against training-time, backdoor poisoning attacks. These attacks induce pre-determined, adversarial behavior in the agent upon observing a fixed trigger during deployment while allowing the agent to solve its intended task during training. Prior attacks rely on arbitrarily large perturbations to the agent’s…

-

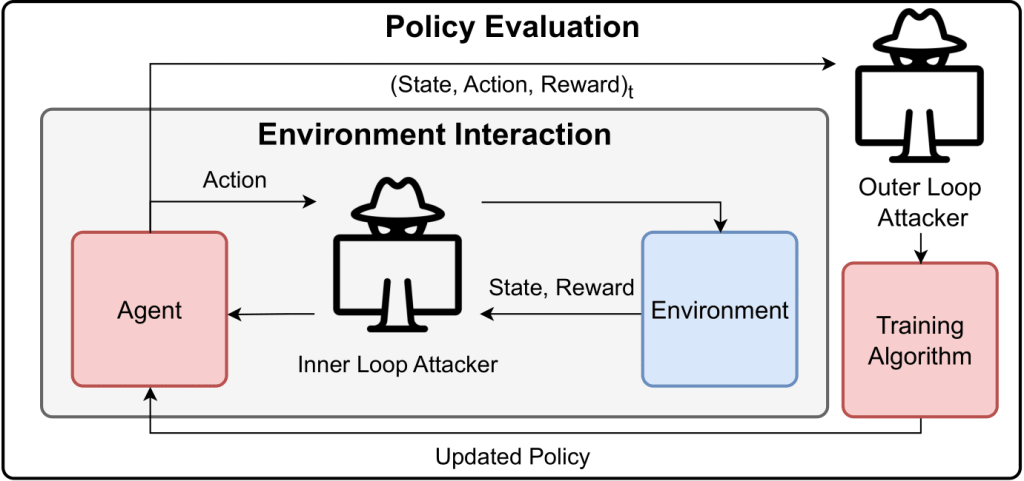

SleeperNets: Universal Backdoor Poisoning Attacks Against Reinforcement Learning Agents

Abstract: Reinforcement learning (RL) is an actively growing field that is seeing increased usage in real-world, safety-critical applications — making it paramount to ensure the robustness of RL algorithms against adversarial attacks. In this work we explore a particularly stealthy form of training-time attacks against RL — backdoor poisoning. Here the adversary intercepts the training…

-

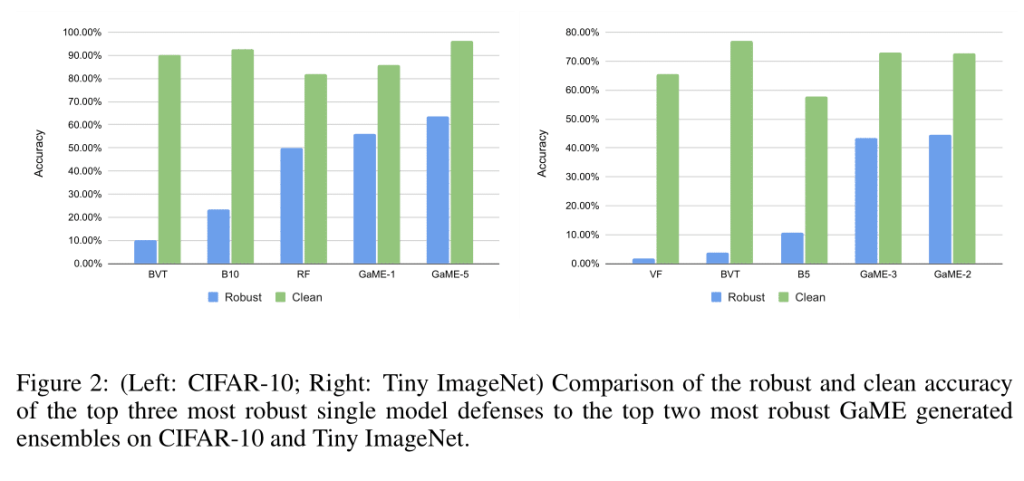

Game Theoretic Mixed Experts for Combinational Adversarial Machine Learning

Abstract: Recent advances in adversarial machine learning have shown that defenses considered to be robust are actually susceptible to adversarial attacks which are specifically tailored to target their weaknesses. These defenses include Barrage of Random Transforms (BaRT), Friendly Adversarial Training (FAT), Trash is Treasure (TiT) and ensemble models made up of Vision Transformers (ViTs), Big…

-

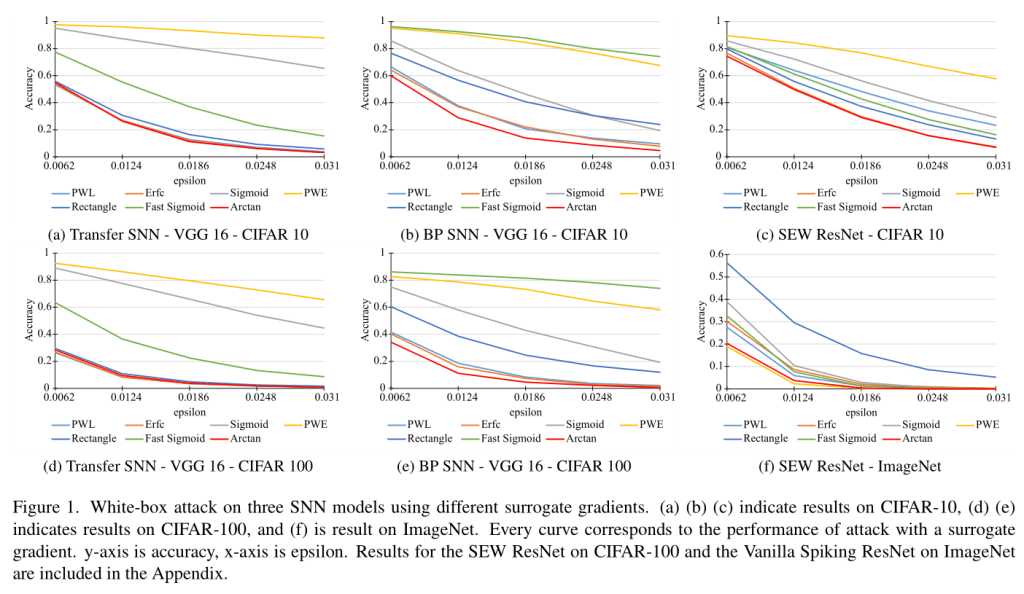

Securing the Spike: On the Transferability and Security of Spiking Neural Networks to Adversarial Examples

Abstract: Spiking neural networks (SNNs) have attracted much attention for their high energy efficiency and for recent advances in their classification performance. However, unlike traditional deep learning approaches, the analysis and study of the robustness of SNNs to adversarial examples remains relatively underdeveloped. In this work we advance the field of adversarial machine learning through…

-

Back in Black: A Comparative Evaluation of Recent State-Of-The-Art Black-Box Attacks

Abstract: The field of adversarial machine learning has experienced near-exponential growth in the number of papers produced since 2018. This massive information output has yet to be properly processed and categorized. In this paper, we seek to help alleviate this problem by systematizing the recent advances in adversarial machine learning black-box attacks…